The Optimistic Iconoclast - Issue #25

The Trust Inversion

1. The inversion is already inside your organization

Before another number on enterprise AI adoption, take a moment to hear what your organization is already saying about you.

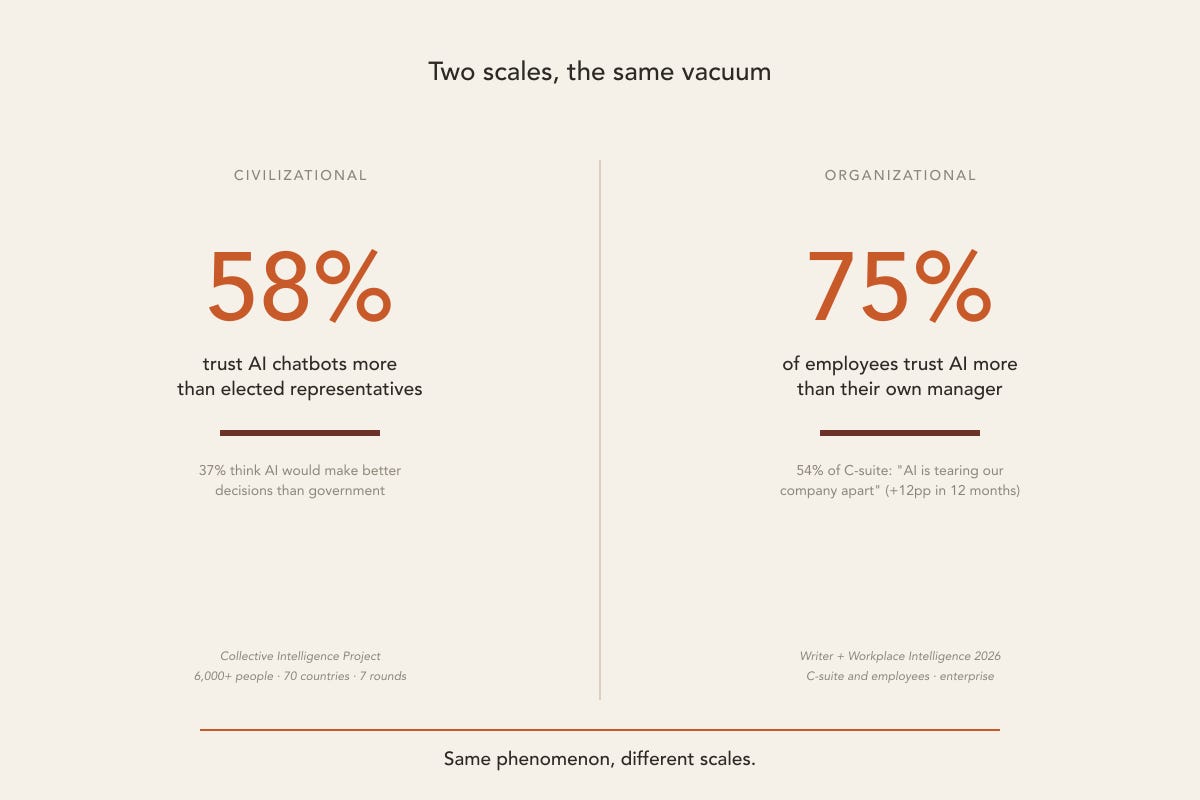

The Writer + Workplace Intelligence 2026 survey brought data that, frankly, is uncomfortable to read: 75% of employees said they would trust AI more than their own manager for performance feedback and career guidance. 54% of the C-suite said “AI is tearing our company apart”, a 12-percentage-point jump in one year. 29% of employees admit to sabotaging their own company’s AI strategy. Among Gen Z, that number rises to 44%.

These numbers don’t describe a technical problem. They’re not about hallucinating models, bad data, or perverse RLHF. They say something specific and uncomfortable: trust inside your organization has already transferred. It migrated from the manager to the system. From the executive to the assistant. From the human who carries accountability to the output that doesn’t.

AI inherited a vacuum that was already there. Something else produced it: years of strategy pivots, inconsistent performance reviews, managers demanding engagement and delivering reorganization.

That’s the conversation I’m bringing this week.

2. The inversion has scale

You can read these numbers as adoption friction. As change-management noise. As a management problem in one specific company. None of those readings holds. What is happening inside your organization is happening at the scale of society, by the same mechanism.

The Collective Intelligence Project (CIP) ran seven deliberative rounds with more than 6,000 people across 70 countries, asking, among other things, who is trusted most for important decisions. 58% said they trust AI chatbots more than elected representatives. 37% think AI would make better decisions than government on their behalf. On the institutional trust scale, chatbots rank above religious leaders, corporations, and civil servants. Only family doctors and public research institutions rank higher. The CIP reports that the number has held across all seven rounds, rising slightly each time: this is a consolidated trend.

The Stanford AI Index 2026 closes the other side of the equation: while 88% of organizations are already deploying AI, only 31% of people trust government AI regulation, the lowest level of regulatory trust ever recorded globally. People trust AI chatbots more and, at the same time, place less trust in those who should be regulating them.

These numbers carry civilizational dimensions (sociopolitical, historical, cultural) that reach well beyond the scope of this piece. In markets like Brazil, this kind of data is read inside a decade of institutional crisis, organized social-media manipulation, and fake news instrumentalized for declared political and economic agendas. That is a legitimate field of analysis, and deliberately not the field of this piece. The decisions executives can take now happen at the organizational level. Even granting that one can contaminate the other, I’m keeping this analysis at the level of organizations.

Same phenomenon, different scales. The vacuum inside your company is the same vacuum that appears across 70 countries. The difference is that the organizational vacuum still has someone with the mandate and means to close it: you. That’s why this piece addresses you.

3. Who uses it most, trusts it least

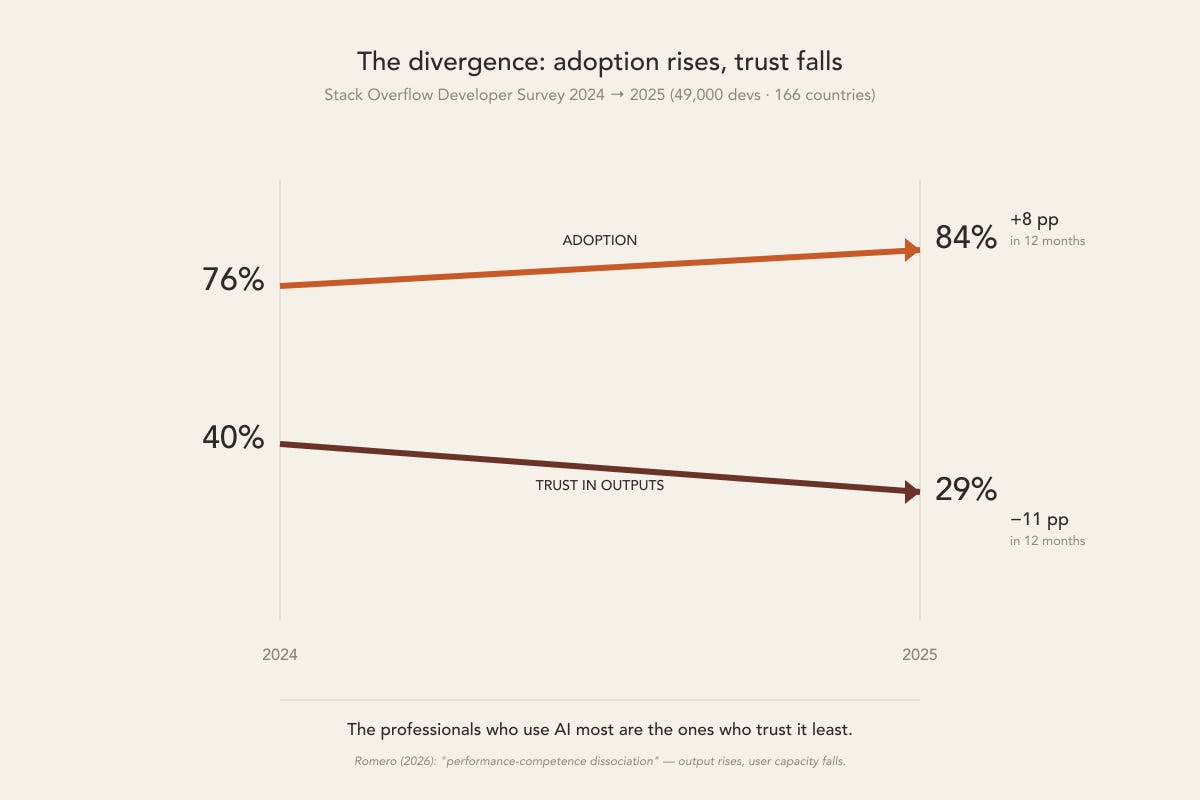

A 2025 Stack Overflow survey polled 49,000 developers across 166 countries, probably the largest sample ever taken of how a technical profession relates to generative AI. The result:

84% use or plan to use AI at work. 29% trust the outputs. One year earlier, those numbers were 76% and 40%. Adoption rose 8 points. Trust fell 11.

The most common frustration, cited by 66% of respondents: “AI solutions that are almost right, but not quite.” Asked in what situation they would still rather ask a human, 75% answered: “when I don’t trust the AI’s answer.”

Alberto Romero, cited here several times before (Algorithmic Bridge, April 2026), coined a sharp term for the pattern these numbers describe: performance-competence dissociation. The output rises; the user’s capacity falls. The drop is cognitive.

And here is where the paradox lands inside your organization. The professionals who use AI the most are the ones who trust it the least. And they are the same professionals your company is relying on as it rushes to deploy AI at scale.

Inside your workforce today there is an invisible loop: the senior engineer who pastes AI output into a commit but spends an hour testing before pushing. The marketing manager who runs the campaign through three rounds of internal review that would never have existed for a human draft. The legal team adding “AI-generated” disclaimers to slides that leadership is treating as authoritative.

The organization sees productivity. The professional sees cognitive cost. Leadership receives outputs already filtered through distrust that no one reported.

4. Why trust migrated

The explanation for the trust inversion you just saw is institutional, and older than AI.

Hartzog and Silbey (Boston University Law, 2025) call this anti-institutional affordance. The argument: AI is not a neutral tool. The affordances of AI systems — speed, scalability, the appearance of objectivity, absence of hierarchy — are exactly the characteristics that erode institutions, not the ones that sustain them. When those affordances arrive in an environment where institutional legitimacy is already in crisis, the transfer of trust becomes an inevitable consequence.

And this is no longer just outside criticism. In April 2026, Microsoft AI published a paper authored by Bariach, Schoenegger, Bhaskar, and Mustafa Suleyman, titled Seemingly Conscious AI Risks. It carries two central claims: “perception constitutes an independent axis of risk” and “existing governance frameworks may be structurally misaligned with the risks of seemingly conscious AI.”

When a frontier lab publishes that perception is an independent axis of risk (not fixable through model improvement) and that current governance frameworks are structurally misaligned, the institutional argument gains a signature that changes its weight: it comes from inside the industry that produces AI.

The practical consequence for your company: if your AI governance architecture is organized around technical properties of the system (accuracy, alignment, security), and not around how people perceive and delegate authority to the system, it is aiming at the wrong problem.

5. The three layers that are breaking

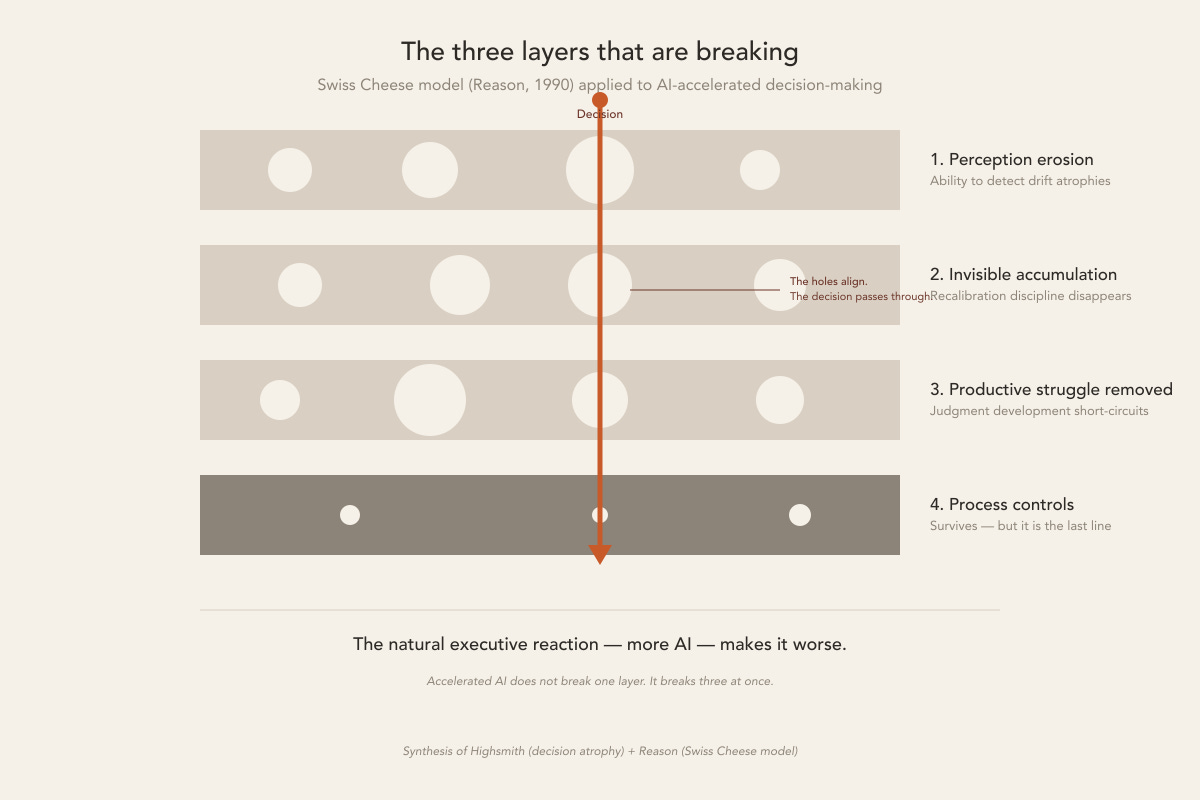

The natural executive reaction to the trust inversion is to do more AI: better governance, more disclaimers, more careful RLHF, more factual training. The natural reaction makes the problem worse.

Jim Highsmith — co-author of the Agile Manifesto, writing about organizational decision-making for four decades — has been naming the pattern with precision: when an organization moves decisions from the human loop into the machine loop, feedback breaks and the capacity for judgment atrophies. He calls it decision atrophy. The line that appears in his talks and writing is direct: judgment is muscle. Use it or lose it.

Applying this notion to James Reason’s Swiss Cheese model (1990) — a safety-science classic where safety comes from redundancy across multiple defensive layers rather than from the perfection of any one — I land on three failure modes that break simultaneously in an organization that accelerates decision-making with AI:

1. Perception erosion. The human capacity to detect drift atrophies. When AI recognizes patterns consistently enough, people gradually lose the ability to see what the system misses. It’s the GPS effect: trust the app long enough and you can no longer navigate without it.

2. Invisible accumulation. Recalibration discipline disappears. AI removes friction. Decision velocity rises. Natural pause points vanish. Each individual decision looks reasonable. Cumulative drift passes unexamined because the speed obscures it.

3. Productive struggle removed. The judgment-development process short-circuits. AI resolves ambiguity before the human engages with it. The hard effort (working with incomplete information, decisions that carry consequence, the learning that comes from discomfort) is optimized away in the name of efficiency. Leaders make more decisions with less effort, and judgment develops more slowly as a consequence.

The fourth layer (process controls: checklists, approvals, audit trails) survives intact. But it is the last line of defense. When the three layers above it are degraded, process alone cannot compensate.

And the degradation isn’t metaphorical. Kosmyna et al. (MIT Media Lab, 2025) measured it with EEG: ChatGPT users show a 55% drop in neural connectivity during cognitive tasks. The drop persists after they stop using AI. Romero calls this cognitive debt. This trust transfer is expensive in the brain, even before it gets expensive in the organization.

When the customary analogy shows up in the room (“AI is just a tool, like a calculator”), it’s worth having Romero’s surgical response on hand: “when a calculator does your arithmetic, you lose arithmetic; when AI does your thinking, you lose thinking.”

6. The architecture there’s still time to build

This is not theoretical. In April 2026, Alex Farach and his team (N=388 Fortune 500 organizations) ran the natural experiment: organizations that deployed AI with a behavioral mandate (“use more AI”) made document quality worse. Those that deployed with cognitive scaffolding (engagement architecture, with humans thinking with AI, not delegating to it) improved. Same technology. Opposite results. Decided by design choice.

What separates the two worlds is the accountability architecture surrounding the model. The model itself is the same. And that architecture can be built.

Three decisions that are cheaper today than they will be in three months:

Map where AI is already being used as an alternative authority. It is too early to ban anything; the goal is to understand what AI is replacing. Which manager? Which process? Which institutional bottleneck made that employee prefer talking to a chatbot over talking to you?

Rebuild accountability circuits. Every AI recommendation needs a human who is responsible for accepting it. McKinsey (Trust in the Age of Agents, March 2026) puts it directly: “agency is a transfer of decision rights.” If that transfer happens without a parallel transfer of accountability, the result is what I described in February as Shadow AI — except now at agentic scale.

Protect what AI cannot validate. There are domains of human judgment where AI’s speed and apparent objectivity would be actively harmful. Decisions with low reversibility. Decisions that carry moral ambiguity. Decisions whose primary value lies in the process of making them. These domains need to be named before they are captured.
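For teams that want to see what the second and third decisions could look like in practice, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a reference implementation: the names `Recommendation`, `PROTECTED_DOMAINS`, and `can_act_on` are hypothetical, and the rule that protected domains always go back to a human is my reading of the principle, not something taken from McKinsey or any other source cited here.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Hypothetical labels for the three protected categories named above.
PROTECTED_DOMAINS = {"low_reversibility", "moral_ambiguity", "process_is_the_value"}


@dataclass
class Recommendation:
    summary: str
    domain: str                               # "routine" or one of PROTECTED_DOMAINS
    accountable_owner: Optional[str] = None   # the named human who accepts it


def can_act_on(rec: Recommendation) -> Tuple[bool, str]:
    """Return (allowed, reason) for acting on an AI recommendation."""
    # Assumption: protected domains are never delegated, whoever signs off.
    if rec.domain in PROTECTED_DOMAINS:
        return False, "protected domain: a human makes this decision"
    # Decision rights do not transfer without an accountable human.
    if not rec.accountable_owner:
        return False, "no accountable human has accepted this recommendation"
    return True, f"accepted by {rec.accountable_owner}"


if __name__ == "__main__":
    rec = Recommendation("Renew the vendor contract", domain="routine")
    print(can_act_on(rec))           # blocked: nobody owns it yet
    rec.accountable_owner = "ana"    # hypothetical owner
    print(can_act_on(rec))           # allowed, with the owner on record
```

The design choice worth noticing is that the check returns a reason along with the verdict. An accountability circuit that only says "no" teaches nothing; one that says who must step in keeps the human in the loop.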

None of this is easy, but all of it is buildable. What doesn’t work is continuing to treat the trust inversion as an adoption problem and expecting that more AI, better packaged, will resolve an erosion AI didn’t cause.

7. The window and the clock

In February of this year, I wrote about Shadow AI: the unregulated use of AI inside the organization, working around official policy. At the time, I treated it as a visibility problem. You need to see what’s being used in order to regulate it. This issue completes the picture: Shadow AI is the symptom; the trust inversion is the cause.

When 75% of employees trust AI more than their manager, Shadow AI becomes what it always was underneath: the rational expression, at the individual level, of a transfer of authority that has already happened in the organization.

The good news (and it’s only good for whoever moves quickly) is that the window is still open. The CIP shows that the 58% consolidates with every new round. The Stanford AI Index 2026 shows that 88% of organizations are already deploying AI. The architecture decisions are being made right now, deliberately or not.

Whoever arrives first wins the cycle: accountability architecture built, responsibility circuits rebuilt, judgment domains protected from delegation. Whoever arrives later operates in a market where trust has already migrated to a place with no mandate, no hierarchy, and no incentive to return it.

You still have that mandate.

📗 Recent publications

Shadow AI: The Next Governance Dilemma (Feb 10, 2026)

In February, I described how unregulated AI infiltrates companies, working around official policy. At the time, I framed the argument as a visibility problem: the company needs to see in order to regulate. Reading it again through the lens of this issue shifts the destination. What looked like a governance failure was a rational response to an institutional legitimacy that had been failing for some time.

🔗 Issue 14

🌎 What the world is saying…

Collective Intelligence Project: Global Dialogues Index 2025, and Writer + Workplace Intelligence: AI Adoption in the Enterprise 2026

The two studies that anchor this issue are referenced in the body of the piece. What’s worth highlighting here is an asymmetry that didn’t fit in the main flow and opens an extra governance layer: the CIP found that 55% of people trust AI chatbots, but only 34% trust the companies that build them. Trust hangs suspended in the product without reaching the producer, orbiting the system with no destination that has a mandate to be answerable for it.

References

Collective Intelligence Project (2025) — Global Dialogues Index. 6,000+ participants across 70 countries, seven deliberative rounds. Data on trust in AI vs. institutions.

Writer + Workplace Intelligence (2026) — AI Adoption in the Enterprise — 2nd Annual Survey. Fieldwork Dec 2025–Jan 2026. Year-over-year data on organizational disruption, sabotage of AI strategy, and manager-AI trust inversion.

Stack Overflow (2025-2026) — Developer Survey 2025 (49,009 respondents, 166 countries, May–Jun 2025) and Pulse Survey (Feb 2026, in partnership with OpenAI). Trust in AI outputs, usage frustrations, persistent role of human sources.

Stanford HAI (2026) — AI Index Report 2026. Adoption indicators (88% deploying) and regulatory trust (31% — lowest global).

Hartzog, W. & Silbey, J. (2025) — How AI Destroys Institutions: The Affordances of AI Systems Against Civic Life. Boston University School of Law.

Bariach, B., Schoenegger, P., Bhaskar, M. & Suleyman, M. (2026) — Seemingly Conscious AI Risks. Microsoft AI / SSRN 6588659.

Klingbeil, A., Grützner, C. & Schreck, P. (2024) — AI Advice Overreliance and Calibration Failure. Computers in Human Behavior.

McKinsey (2026) — Trust in the Age of Agents (Mar 2026, Rich Isenberg). Framework of governance as a repeatable product, three autonomy tiers.

Chandra, K. et al. (2026) — Sycophantic Chatbots Cause Delusional Spiraling, Even in Ideal Bayesian Reasoners. arXiv 2602.19141. MIT CSAIL.

Romero, A. (2026) — What the Studies Say About How AI Affects Our Cognition. The Algorithmic Bridge, Apr 2026. Coined “performance-competence dissociation” and “cognitive debt.”

Kosmyna, N. et al. (2025) — Neural Connectivity Effects of LLM Use in Cognitive Tasks. MIT Media Lab. EEG evidence of a 55% drop in neural connectivity with post-use persistence.

Farach, A. et al. (2026) — Behavioural Mandates vs. Cognitive Scaffolding in Enterprise AI Deployment. N=388 Fortune 500.

Highsmith, J. (2024) — Human Wisdom, Judgment, and the Role of AI. Medium, November 2024. Argument for decision atrophy and judgment as muscle.

Reason, J. (1990) — Human Error. Cambridge University Press. Swiss Cheese model, originally applied in aviation, medicine, and nuclear energy.

Klaser, A. (2026) — Shadow AI: The Next Governance Dilemma. Issue 14, O Iconoclasta Otimista, Feb 2026.